Anthropic just flipped the script on Claude model pricing

What if a Haiku model could think like Opus?

Hi,

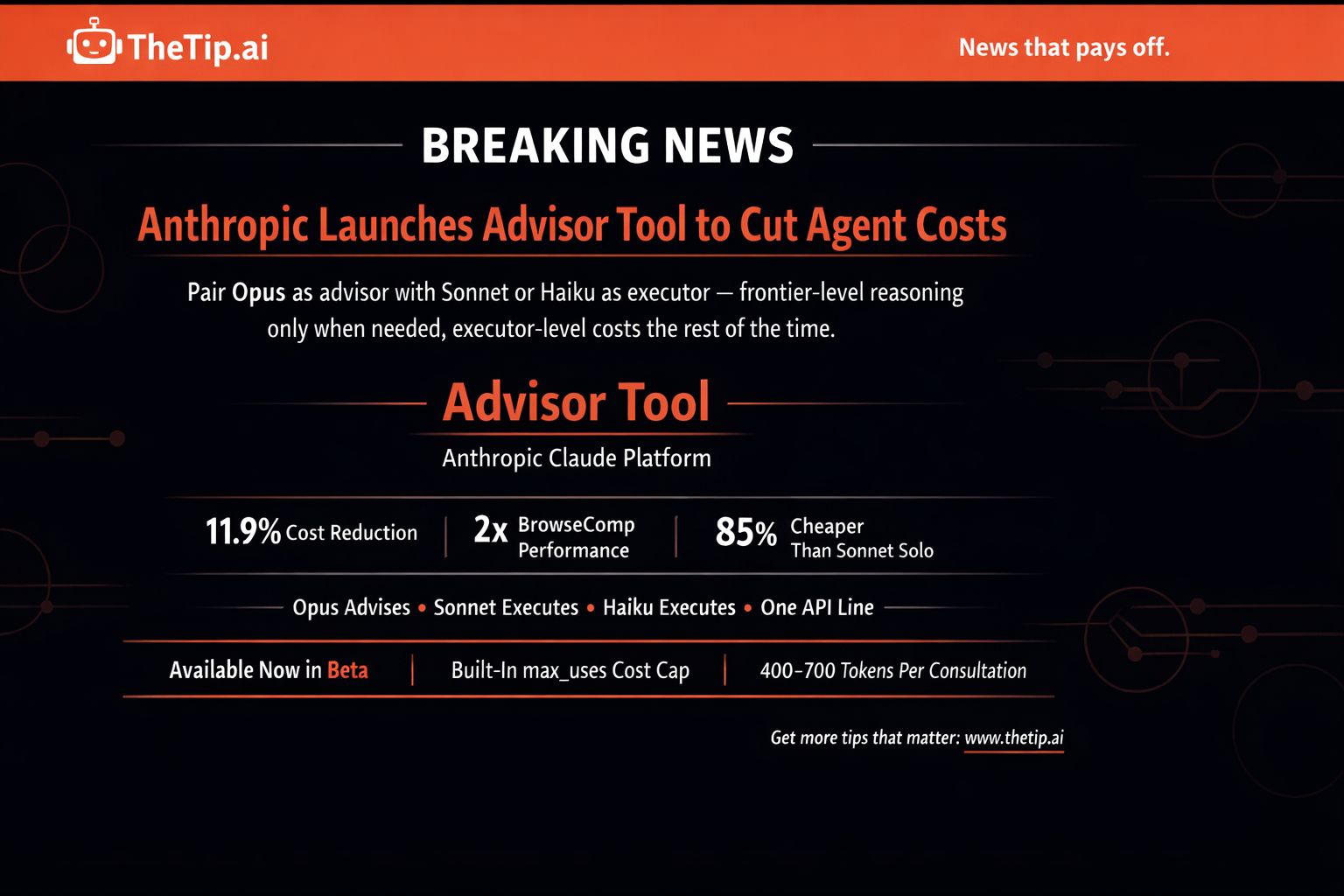

Anthropic just launched the advisor tool on the Claude Platform.

Pair Opus as an advisor with Sonnet or Haiku as the executor. Get near Opus-level intelligence at a fraction of the cost.

Sonnet with Opus advisor scored 2.7 percentage points higher on SWE-bench Multilingual than Sonnet alone. Cost per task dropped 11.9%.

Haiku with Opus advisor more than doubled its BrowseComp score. Still costs 85% less per task than Sonnet solo.

One-line change in your API call. Available now in beta.

Today's prompt extracts publishable case studies from every client call you have. Plus why self-healing ad campaigns become the norm by 2027. Then Anthropic's new tool that cuts your AI agent costs without sacrificing intelligence.

🔥 Prompt of the Day 🔥

Case Study Interview Question Bank: Use ChatGPT or Claude

Create one result-extracting client interview system.

"Act as a case study specialist. Create one client interview framework for [SERVICE BUSINESS] that produces publishable success stories.

Essential Details:

Client Results: [OUTCOMES ACHIEVED]

Interview Format: [CALL/WRITTEN]

Duration: [TIME ALLOTTED]

Story Arc Goal: [TRANSFORMATION TYPE]

Metrics Needed: [DATA POINTS]

Usage: [WEBSITE/SALES/ADS]

Create one interview bank including:

Before-state discovery questions

Decision moment questions

Implementation story prompts

Result quantification asks

Emotional impact queries

Recommendation request framing

Extract case study gold every time."

Variables:

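SERVICE BUSINESS: What type of service you deliver

OUTCOMES ACHIEVED: What results your clients get

CALL/WRITTEN: Whether the interview is a live call or written responses

TIME ALLOTTED: How long the interview runs

TRANSFORMATION TYPE: The transformation you want to highlight

DATA POINTS: The specific numbers that prove results

WEBSITE/SALES/ADS: Where the finished case study will be used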
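
If you reuse this prompt for every client, filling the brackets by hand gets error-prone. Here is a minimal Python sketch, assuming you store a shortened version of the prompt as a string with the bracketed placeholders exactly as written above. The `fill_prompt` helper and the sample values are illustrative only, not part of any tool.

```python
import re

# Shortened copy of the prompt above; bracketed names are the placeholders.
TEMPLATE = (
    "Act as a case study specialist. Create one client interview framework "
    "for [SERVICE BUSINESS] that produces publishable success stories.\n"
    "Client Results: [OUTCOMES ACHIEVED]\n"
    "Interview Format: [CALL/WRITTEN]\n"
    "Duration: [TIME ALLOTTED]\n"
    "Story Arc Goal: [TRANSFORMATION TYPE]\n"
    "Metrics Needed: [DATA POINTS]\n"
    "Usage: [WEBSITE/SALES/ADS]"
)


def fill_prompt(template: str, values: dict) -> str:
    """Replace each [PLACEHOLDER] with its value; fail loudly if one is missing."""

    def substitute(match):
        key = match.group(1)
        if key not in values:
            raise KeyError(f"No value supplied for [{key}]")
        return values[key]

    # Placeholders are runs of capitals, spaces, and slashes inside brackets.
    return re.sub(r"\[([A-Z /]+)\]", substitute, template)


# Hypothetical values for a made-up bookkeeping firm.
prompt = fill_prompt(TEMPLATE, {
    "SERVICE BUSINESS": "a bookkeeping firm",
    "OUTCOMES ACHIEVED": "month-end close cut from 10 days to 3",
    "CALL/WRITTEN": "call",
    "TIME ALLOTTED": "30 minutes",
    "TRANSFORMATION TYPE": "chaos to clarity",
    "DATA POINTS": "days to close, hours saved per month",
    "WEBSITE/SALES/ADS": "website",
})
print(prompt)
```

A missing value raises an error instead of silently sending a prompt with a leftover bracket in it.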

Why This Works:

Bad case studies say "we helped them grow." Good case studies say "revenue increased 43% in 90 days." AI builds the question bank that extracts specific numbers, emotional turning points, and quotable moments. Every interview becomes a sales asset.

🔮 Future Friday 🔮

Self-Healing Ad Campaigns Go Mainstream by 2027

Right now your ads break and you find out after the damage is done.

ROAS drops. CTR tanks. You check the dashboard. Too late.

By 2027 that problem doesn't exist anymore.

Why This Happens

AI marketing tools already handle bidding. Already run A/B tests. Already adjust budgets in real time.

But they react. They don't predict.

The next leap is campaigns that heal themselves before results drop. Not after.

Current State 2026

You set up your campaign. Monitor performance manually. Catch problems when metrics are already red.

Creative fatigue builds quietly. By the time you notice, you've burned budget on a dying ad set.

Audience targeting drifts. You adjust it days later. Competitors already captured the opportunity.

That's the last phase before AI takes the wheel entirely.

What 2027 Looks Like

AI detects creative fatigue 24 to 48 hours before ROAS drops 20%.

New creative variations get tested and swapped automatically. You never see the dip.

Budget shifts happen in seconds across Meta, Google, and TikTok simultaneously.

Audience targeting repairs itself using live customer data. The system knows who's converting before you do.

Full funnel issues get patched without human input. Not just one variable. The entire campaign.
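
What might "detecting fatigue before ROAS drops" look like in its simplest form? One toy approach: fit a trend line to recent daily CTR and raise a flag when the projection crosses a floor you set. This is a sketch for intuition, not how any ad platform works. The function names, the 2-day horizon, and the sample numbers are all assumptions.

```python
from statistics import mean


def projected_ctr(daily_ctr, days_ahead):
    """Project CTR forward using a least-squares line through the history."""
    n = len(daily_ctr)
    if n < 2:
        raise ValueError("need at least two days of data")
    xs = range(n)
    x_bar, y_bar = mean(xs), mean(daily_ctr)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, daily_ctr)) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    intercept = y_bar - slope * x_bar
    # The last observed day is x = n - 1, so look days_ahead past it.
    return intercept + slope * (n - 1 + days_ahead)


def fatigue_warning(daily_ctr, floor, days_ahead=2):
    """True when CTR is above the floor today but projected to fall below it."""
    return daily_ctr[-1] >= floor and projected_ctr(daily_ctr, days_ahead) < floor


# Made-up CTR history in percent, sliding 0.1 points a day.
recent = [2.0, 1.9, 1.8, 1.7, 1.6]
print(fatigue_warning(recent, floor=1.5))  # True
```

A real system would need far more than one metric and a straight line, but the idea is the same: act on the projection, not on the red number.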

Why It Doesn't Exist Yet

Today's tools optimize individual levers. Bids. Creatives. Audiences. Separately.

No platform in 2026 coordinates all three simultaneously in real time with predictive intelligence.

The data infrastructure, model maturity, and platform integrations needed for full campaign autonomy are still catching up.

2027 is when those three things converge.

What Changes Everything

When AI can predict performance decline before it happens — and patch creative, audience, and budget in one coordinated move — campaign management stops being a daily job.

It becomes a setup-and-monitor function. Not a hands-on discipline.

What This Means

If you run paid ads: The competitive edge in 2027 won't be who manages campaigns best. It'll be whose AI catches problems first.

If you build marketing tools: Predictive self-healing is the feature that separates next-generation platforms from today's dashboards.

If you manage ad budgets: Less wasted spend on underperforming creative. AI patches it before your money is gone.

Self-healing campaigns aren't a feature upgrade. They're a fundamental shift in who's running your marketing.

Did You Know?

Netflix's recommendation system, built on machine learning, is estimated to save the company around a billion dollars a year by reducing subscriber churn — personalising suggestions across hundreds of millions of viewers.

🗞️ Breaking AI News 🗞️

Anthropic Launches Advisor Tool to Cut Agent Costs

Anthropic just introduced a smarter way to run AI agents.

Called the advisor tool. Available now in beta on the Claude Platform.

Pairs Opus as an advisor with Sonnet or Haiku as the executor. Frontier-level reasoning when you need it. Executor-level costs the rest of the time.

One-line change in your API call.

The Problem It Solves

Running Opus end-to-end is expensive.

Running Sonnet or Haiku alone misses complex decisions.

Most developers choose one or the other and accept the tradeoff.

The advisor strategy eliminates that choice.

How It Works

Sonnet or Haiku runs the task end-to-end as the executor. Calls tools. Reads results. Iterates toward a solution.

When the executor hits a decision it can't reasonably solve, it consults Opus automatically.

Opus accesses the shared context. Returns a plan, a correction, or a stop signal. Executor resumes.

Advisor never calls tools or produces user-facing output. Only provides guidance when needed.
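
The control flow described above can be sketched without touching the real API. Everything here is a stand-in: `executor_step` and `advisor_guidance` are hypothetical stubs for the two models, and the "stuck" flag stands in for whatever signal makes the executor ask for help. Only the `max_uses` name comes from the announcement.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Transcript:
    """Shared context that both executor and advisor can read."""
    events: list = field(default_factory=list)
    advisor_calls: int = 0


def advisor_guidance(transcript: Transcript) -> str:
    # Stub for the advisor model: reads shared context, returns guidance only.
    # It never calls tools and never writes user-facing output.
    return f"plan based on {len(transcript.events)} prior events"


def executor_step(step: int, guidance: Optional[str]):
    # Stub for the executor model: returns (event, stuck).
    # It pretends to get stuck on step 2 until it has guidance.
    if step == 2 and guidance is None:
        return "hit a hard decision", True
    return f"completed step {step}", False


def run_task(total_steps: int, max_uses: int) -> Transcript:
    transcript = Transcript()
    for step in range(1, total_steps + 1):
        event, stuck = executor_step(step, None)
        transcript.events.append(event)
        # Consult the advisor only when stuck and only while under the cap.
        if stuck and transcript.advisor_calls < max_uses:
            guidance = advisor_guidance(transcript)
            transcript.advisor_calls += 1
            transcript.events.append(f"advisor: {guidance}")
            event, _ = executor_step(step, guidance)  # executor resumes
            transcript.events.append(event)
    return transcript


for line in run_task(total_steps=3, max_uses=1).events:
    print(line)
```

In this toy run the advisor is consulted once, on the one step where the executor is stuck. Every other step runs on the executor alone.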

The Numbers

Sonnet with Opus advisor: 2.7 percentage point improvement on SWE-bench Multilingual over Sonnet alone. Cost per task down 11.9%.

Haiku with Opus advisor on BrowseComp: scored 41.2% — more than double Haiku's solo score of 19.7%.

Haiku with Opus advisor costs 85% less per task than Sonnet solo.
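
A quick sanity check on those figures. "Percentage points" and "percent" are different units, and benchmark claims mix them freely. The only inputs below are the numbers quoted in this section.

```python
haiku_solo = 19.7          # BrowseComp score, percent
haiku_with_advisor = 41.2  # BrowseComp score, percent

point_gain = haiku_with_advisor - haiku_solo  # absolute gain, percentage points
multiple = haiku_with_advisor / haiku_solo    # relative gain, as a multiple

print(f"{point_gain:.1f} points, {multiple:.2f}x")  # 21.5 points, 2.09x

# "85% less per task than Sonnet solo" means 15% of Sonnet's per-task cost.
relative_cost = 1 - 0.85
print(f"{relative_cost:.2f} of Sonnet's per-task cost")  # 0.15 of Sonnet's per-task cost
```

So "more than double" holds: 41.2 is about 2.09 times 19.7.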

Built-In Cost Controls

Set max_uses to cap advisor calls per request.

Advisor tokens billed at Opus rates. Executor tokens billed at Sonnet or Haiku rates.

Advisor typically generates only 400-700 tokens per consultation. Overall cost stays well below running Opus end-to-end.

Advisor tokens reported separately in usage block for full spend visibility.
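
To see why a few short consultations don't dominate the bill, here is a back-of-the-envelope model. The per-million-token prices and token counts are placeholders, not Anthropic's rates, so plug in current pricing. The model also counts only generated tokens and ignores what the advisor reads from shared context, which is a simplification. The split itself follows the description above: executor tokens at the executor rate, advisor tokens at the advisor rate, consultations capped by `max_uses`.

```python
def task_cost(executor_tokens, executor_price_per_mtok,
              advisor_calls_wanted, tokens_per_advisor_call,
              advisor_price_per_mtok, max_uses):
    """Estimate generated-token cost for one task, in dollars.

    Returns (executor_cost, advisor_cost). Prices are per million tokens.
    """
    billed_calls = min(advisor_calls_wanted, max_uses)  # the cap limits consultations
    executor_cost = executor_tokens / 1_000_000 * executor_price_per_mtok
    advisor_tokens = billed_calls * tokens_per_advisor_call
    advisor_cost = advisor_tokens / 1_000_000 * advisor_price_per_mtok
    return executor_cost, advisor_cost


# Placeholder inputs: 20,000 executor tokens, 700 tokens per consultation,
# the executor wants 5 consultations but max_uses allows 3.
executor_cost, advisor_cost = task_cost(
    executor_tokens=20_000, executor_price_per_mtok=5.0,
    advisor_calls_wanted=5, tokens_per_advisor_call=700,
    advisor_price_per_mtok=25.0, max_uses=3,
)
# Same 20,000 tokens generated entirely at the advisor's rate, for comparison.
all_advisor_cost = 20_000 / 1_000_000 * 25.0

print(f"executor ${executor_cost:.4f} + advisor ${advisor_cost:.4f}")
print(f"advisor model end-to-end: ${all_advisor_cost:.4f}")
```

With these made-up inputs, the advisor's share is a fraction of what the same task would cost at the advisor's rate throughout.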

Why This Matters

Intelligence and cost have always been a tradeoff in AI agents.

The advisor strategy breaks that tradeoff. Frontier reasoning applies only when the executor needs it. Everything else runs at lower cost.

For developers: Better agent performance at lower cost. No orchestration logic required.

For enterprises: High-volume tasks that previously couldn't justify Opus now can.

For the AI market: Anthropic is showing that smarter architecture can beat raw model size for cost efficiency.

The Bottom Line

Developers building agents: run your eval suite against Sonnet solo vs Sonnet with Opus advisor. The numbers speak for themselves.

Cost-conscious teams running Haiku: add an Opus advisor and test it on your own workload. In Anthropic's numbers, Haiku's BrowseComp score more than doubled and the setup still came in cheaper than Sonnet solo.

Anyone managing AI spend: built-in cost caps and separate token reporting mean no more billing surprises.
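
If you do run that comparison, two numbers matter: the change in pass rate (in percentage points) and the change in cost per task (in percent). A minimal sketch of the bookkeeping follows. The `TaskResult` shape and the four-task results are invented for illustration, so swap in whatever your eval harness actually records.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    passed: bool
    cost_usd: float


def summarize(results):
    """Return (pass rate in percent, mean cost per task in dollars)."""
    pass_rate = 100 * sum(r.passed for r in results) / len(results)
    mean_cost = sum(r.cost_usd for r in results) / len(results)
    return pass_rate, mean_cost


def compare(baseline, candidate):
    """Compare two runs of the same eval suite."""
    base_rate, base_cost = summarize(baseline)
    cand_rate, cand_cost = summarize(candidate)
    return {
        "pass_rate_delta_points": cand_rate - base_rate,
        "cost_change_percent": 100 * (cand_cost - base_cost) / base_cost,
    }


# Made-up results for the same four tasks under each setup.
solo = [TaskResult(True, 0.10), TaskResult(False, 0.10),
        TaskResult(False, 0.10), TaskResult(True, 0.10)]
with_advisor = [TaskResult(True, 0.09), TaskResult(True, 0.09),
                TaskResult(False, 0.09), TaskResult(True, 0.09)]

report = compare(solo, with_advisor)
print(report)
```

Run both configurations on the same tasks, and use enough tasks that a one-task swing doesn't decide the result.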

One API change. Smarter agents. Lower bills.

Over to You...

Opus as your advisor. Sonnet doing the work.

Intelligence where you need it. Savings everywhere else.

Are you switching?

To smarter agents for less,

Jeff J. Hunter

Founder, AI Persona Method | TheTip.ai

P.S. Want to turn AI Agents into a consulting offer? Book your AI Certified Consultant strategy 👉 here.
