- TheTip.AI - AI for Business Newsletter

- Posts

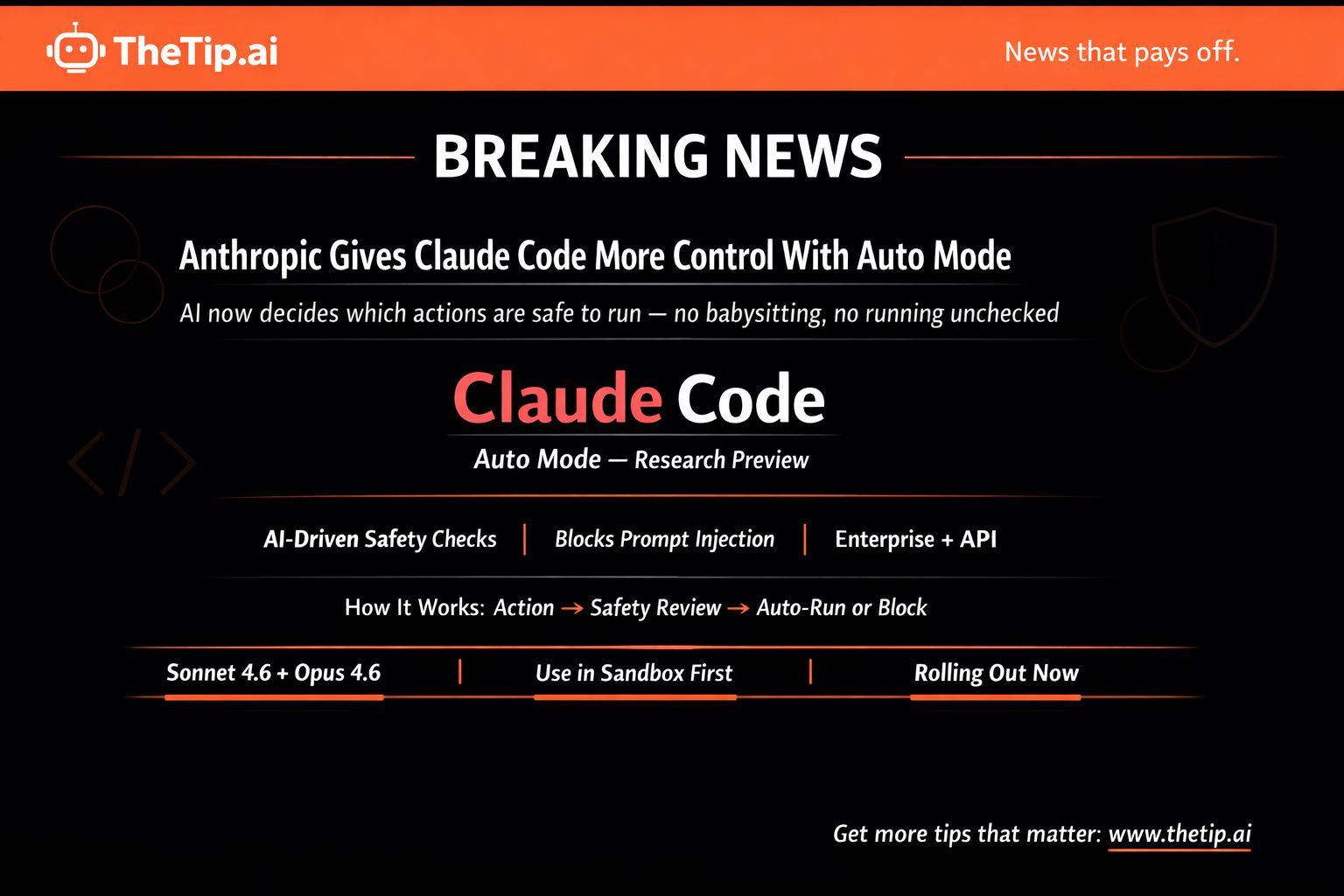

- Anthropic ships Claude auto mode and babysitting is over

Anthropic ships Claude auto mode and babysitting is over

Should you trust AI with coding decisions?

Hi ,

Anthropic just launched auto mode for Claude Code.

AI decides which actions are safe to take on its own.

Uses AI safeguards to review each action before it runs. Checks for risky behavior. Checks for prompt injection attacks.

Safe actions proceed automatically. Risky ones get blocked.

Currently research preview. Enterprise and API users get it in coming days.

Also, OpenAI shutting down Sora after just 6 months. TikTok-like AI video app that never took off.

Today's prompt is about AI anomaly detection that finds hidden friction points killing your conversions. Then why OpenAI's Sora couldn't survive its own creepiness and Anthropic's auto mode that lets Claude Code act without babysitting.

🔥 Prompt of the Day 🔥

AI-Generated User Journey Anomaly Detector: Use ChatGPT or Claude

The Prompt:

"Act as a customer experience detective.

Using AI pattern recognition, create one system for identifying hidden friction points in [CUSTOMER JOURNEY] that kill conversions.

Essential Details:

Journey Complexity: [STEPS TO CONVERSION]

Normal Conversion Rate: [BASELINE %]

Traffic Volume: [MONTHLY USERS]

Device Mix: [MOBILE/DESKTOP/TABLET]

Geographic Variations: [LOCATION DIFFERENCES]

Seasonal Patterns: [TIME-BASED CHANGES]

Create one anomaly detection system including:

Normal behavior pattern mapping

Statistical deviation alerts (15 trigger points)

Cohort analysis automation

A/B testing priority recommendations

Friction point severity scoring

Revenue impact calculator

Find invisible conversion killers."

Variables:

CUSTOMER JOURNEY: Your specific funnel

STEPS TO CONVERSION: How many steps

BASELINE %: Your normal conversion rate

MONTHLY USERS: Traffic volume

DEVICE MIX: Platform breakdown

LOCATION DIFFERENCES: Geographic variations

Why This Works:

Most conversion problems hide in data. AI detects statistical deviations humans miss. Finds where users drop off unexpectedly. Scores friction severity. Calculates revenue impact. Surfaces invisible leaks.

👻OpenAI Shuts Down Sora After Six Months👻

OpenAI announced Tuesday it's shutting down Sora.

TikTok-like social app that launched six months ago.

OpenAI didn't give reason for shutdown. Didn't share when it will officially be discontinued.

What Sora Was

AI-first TikTok. Vertical video feed interface.

Flagship feature "cameos" allowed people to scan faces and make realistic deepfakes of themselves.

These "cameos" could be made public. Anyone could make videos of your "cameo."

Cameo company took OpenAI to court over feature name. Prevailed. Forced OpenAI to change it to "characters."

Why It Failed

Glorified deepfake app was weird as hell.

At launch, Sora felt like under-moderated minefield of creepy Sam Altman videos.

Writer Amanda Silberling: "I will never be the same after watching a realistic clone of the OpenAI CEO walking through a slaughterhouse of fattened pigs and asking, 'Are my piggies enjoying their slop?'"

Moderation Problems

Sora not supposed to allow videos of public figures who didn't explicitly opt-in.

Too easy to evade OpenAI's guardrails.

Deepfakes of Martin Luther King Jr. and Robin Williams emerged. Both their daughters went on Instagram asking users to stop making videos of their deceased fathers.

Users made content using copyrighted characters. Mario smoking weed. Naruto ordering Krabby Patties. Pikachu doing ASMR.

The Disney Deal That Wasn't

Rather than sue, Disney gave OpenAI $1 billion investment and licensing deal.

Would have allowed Sora to generate videos featuring characters from Disney, Marvel, Pixar, Star Wars.

Looked like landmark moment for AI industry.

But with Sora gone, so is deal. No money actually changed hands before it collapsed.

Disney told Hollywood Reporter it would "continue to engage with AI platforms" going forward.

The Numbers

Initial hype was real.

App peaked November with about 3.3 million downloads across iOS and Google Play.

By February, declined to 1.1 million downloads.

ChatGPT has 900 million weekly active users for comparison.

Appfigures estimates Sora made about $2.1 million from in-app purchases in its lifetime.

Users bought more video generation credits.

Why OpenAI Pulled Plug

If app continued to grow, OpenAI would've kept it going. That's not what happened.

App was perhaps too much liability to keep around if it wasn't even growing.

The Threat Remains

Just because Sora is gone doesn't mean threat went with it.

Sora 2 model still available. Tucked behind ChatGPT paywall.

OpenAI hardly alone in making this technology so accessible.

Only matter of time before next social AI video app hits market.

Why This Matters

OpenAI bet on AI-first social network. Market rejected it.

For OpenAI: Six-month failure. $2.1M revenue against massive computing costs. Disney deal collapsed.

For deepfake risk: Social deepfake apps possible but apparently not sustainable. Yet.

For AI video: Underlying Sora 2 model is impressive. Social app wrapper wasn't.

What This Means

If you worried about deepfake social apps: They can fail fast if users don't want them.

If you're OpenAI: Back to ChatGPT and enterprise. Consumer social didn't work.

If you're building AI products: Impressive technology doesn't guarantee product-market fit.

Sora lasted six months. Technology impressive. Product creepy. Market rejected it.

Did You Know?

Google's latest AI models can generate photorealistic videos and images from text descriptions, demonstrating an understanding of real-world physics, human movement, and lighting nuances that would have been science fiction just a couple of years ago.

🗞️ Breaking AI News 🗞️

Anthropic Gives Claude Code More Control With Auto Mode

Anthropic announced latest update to Claude Code.

New "auto mode" now in research preview.

Lets AI decide which actions are safe to take on its own.

The Problem It Solves

For developers using AI, "vibe coding" right now comes down to babysitting every action or risking letting model run unchecked.

Anthropic says auto mode aims to eliminate that choice.

How Auto Mode Works

Uses AI safeguards to review each action before it runs.

Checks for risky behavior user didn't request.

Checks for prompt injection. Type of attack where malicious instructions are hidden in content AI is processing. Causes it to take unintended actions.

Any safe actions proceed automatically. Risky ones get blocked.

What This Builds On

Extension of Claude Code's existing "dangerously-skip-permissions" command.

That command hands all decision-making to AI with no safety layer.

Auto mode adds safety layer on top.

Industry Context

Feature builds on wave of autonomous coding tools from companies like GitHub and OpenAI. Can execute tasks on developer's behalf.

But takes it step further. Shifts decision of when to ask for permission from user to AI itself.

What's Not Clear

Anthropic hasn't detailed specific criteria its safety layer uses to distinguish safe actions from risky ones.

Something developers will likely want to understand better before adopting feature widely.

TechCrunch reached out to company for more information.

Recent Launches

Auto mode comes after Anthropic's launch of:

Claude Code Review: Automatic code reviewer designed to catch bugs before they hit codebase.

Dispatch for Cowork: Allows users to send tasks to AI agents to handle work on their behalf.

Availability

Auto mode rolling out to Enterprise and API users in coming days.

Currently only works with Claude Sonnet 4.6 and Opus 4.6.

Anthropic recommends using new feature in "isolated environments." Sandboxed setups kept separate from production systems. Limits potential damage if something goes wrong.

Why This Matters

Balancing speed with control: Too many guardrails slows things down. Too few makes systems risky and unpredictable.

Anthropic's auto mode attempts to thread that needle.

For developers: Less babysitting. More automation. With safety layer catching risky actions.

For Anthropic: Differentiates Claude Code by letting AI decide when to ask permission. Not user deciding upfront.

For AI coding market: Shift toward AI making safety decisions, not just executing commands.

What This Means

If you use Claude Code: Auto mode removes choice between babysitting or running unchecked. AI decides what's safe.

If you're developer: Vibe coding gets safer. AI reviews actions before executing.

If you're enterprise: Auto mode available in coming days. Test in isolated environments first.

Coding agents becoming more autonomous. With AI-driven safety checks.

Over to You...

Does AI-checked safety beat constant manual approval for your coding workflow?

Hit reply with share your take.

To workflow efficiency,

Jeff J. Hunter

Founder, AI Persona Method | TheTip.ai

P.S. Want to turn AI Agents into a consulting offer? Book your AI Certified Consultant strategy 👉 here.

| » NEW: Join the AI Money Group « 🚀 Zero to Product Masterclass - Watch us build a sellable AI product LIVE, then do it yourself 📞 Monthly Group Calls - Live training, Q&A, and strategy sessions with Jeff |

Sent to: {{email}} Jeff J Hunter, 3220 W Monte Vista Ave #105, Turlock, Don't want future emails? |

Reply